AI War Crimes: The Law Already Has the Tools to Stop Them. So Why Is No One Using Them?

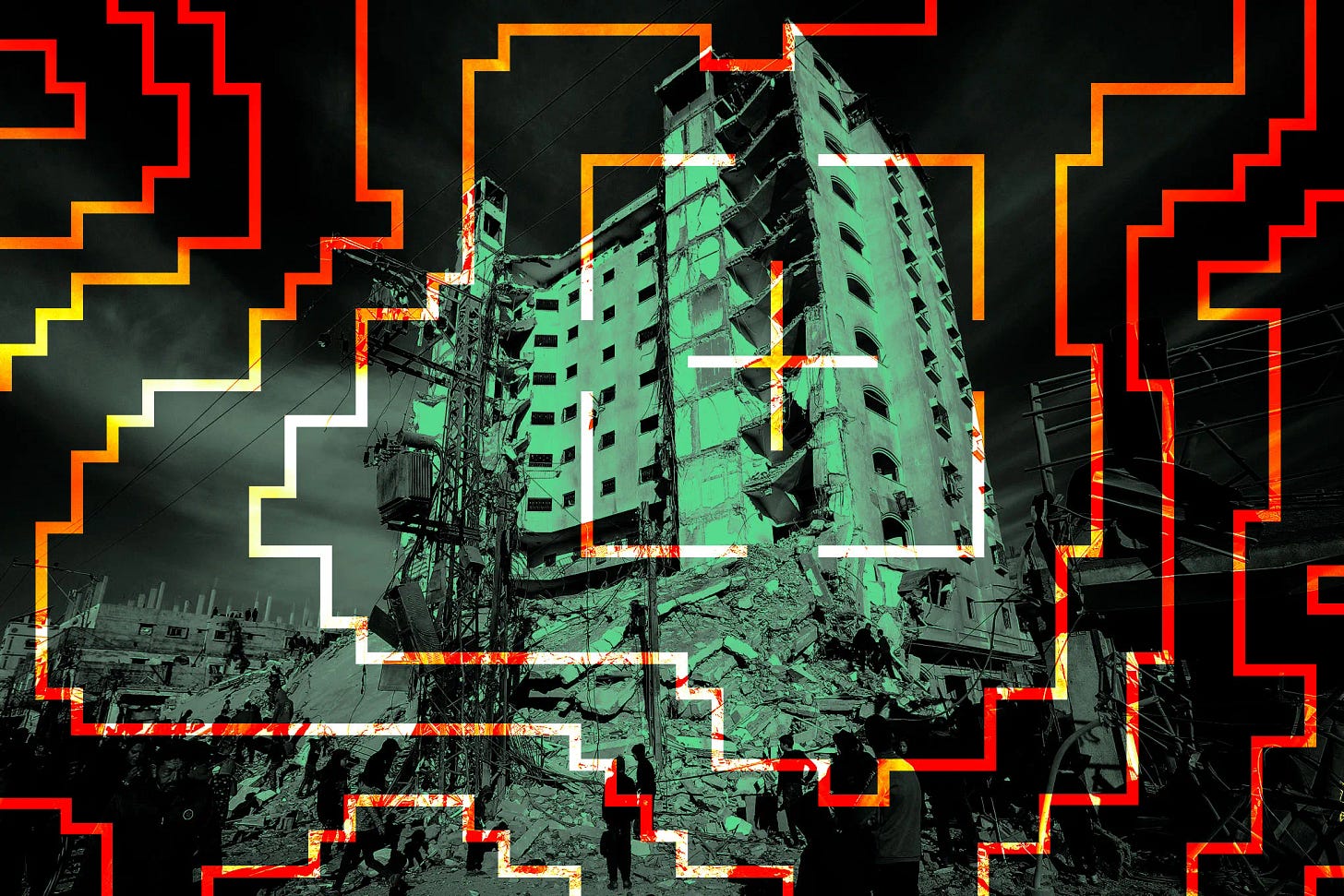

The wars in Gaza and Iran have made the question urgent: who is legally accountable when an algorithm kills the wrong person?

Where, exactly, are the human rights defenders? Human Rights Watch, Amnesty International, and the rest of the alphabet soup of NGOs — are they too busy filing discrimination complaints in European courts, or angling for their next tranche of public subsidies as “trusted flaggers” under the EU’s Digital Services Act? Because something far more consequential has been unfolding.

The world is confronting a question as fundamental as the ones posed by chemical weapons after the First World War, and by nuclear and biological arsenals after the Second: the question of automated AI targeting and autonomous weapons.

The Anthropic Affair: A Window Into the Problem

The clearest illustration of what’s at stake is the dispute between Anthropic — maker of the Claude AI — and the Pentagon.

In July 2025, Anthropic signed a $200 million contract with the U.S. Department of Defense. Claude became the only AI engine cleared for use in classified environments. The dispute that followed centered on Anthropic’s insistence on maintaining restrictions on military use — specifically, its refusal to allow its technology to be deployed in autonomous weapons systems or for mass surveillance.

On February 24, 2026, Defense Secretary Pete Hegseth issued an ultimatum to Anthropic CEO Dario Amodei: remove all restrictions by February 27, or face the consequences. Amodei refused. President Trump then ordered the federal government to stop using Anthropic’s products entirely, granting the Pentagon six months to transition to alternatives. Hegseth designated Anthropic a “supply chain risk” — a label never previously applied to an American company — effectively barring Pentagon contractors from using its products as well.

Anthropic has since filed two separate lawsuits against the Department of Defense: one alleging a First Amendment violation, the other contesting the “supply chain risk” designation. The company says the ban could cost it billions. OpenAI, Sam Altman’s peculiar for-profit NGO, wasted no time filling the void, striking a deal with the Pentagon to replace Anthropic.

Anthropic’s stand deserves credit. But let’s be clear: it is not purely altruistic. It is also a calculated response to the enormous legal exposure — civil and criminal — that unrestricted military AI use would create for the company and its executives. As you will see, that exposure is real and substantial.

A Brief, Damning History of Automated Killing

First, some necessary context. AI is a massive financial bubble, and it has very little to do with intelligence in any meaningful sense. It is, at its core, an additional layer of automation — sophisticated pattern-matching dressed up in the language of cognition.

Automated targeting — striking a target without direct human visual confirmation on the ground — was pioneered by Barack Obama in his drone campaign across Pakistan’s tribal belt, beginning in 2009. The program’s “yield rate” was approximately 6%. That means 94% of those killed were innocent civilians. Thousands of war crimes, methodically administered.

The acceleration begins in 2004, a year worth examining closely. That was when DARPA’s Total Information Awareness [1] program was officially cancelled, then quietly privatized under the stewardship of the man who built it: Admiral John Poindexter. The name should ring a bell. Reagan’s former National Security Advisor. A central figure in the Iran-Contra affair. And at that moment, head of DARPA’s Information Awareness Office.

The program didn’t die — it dispersed. Its research was handed off to the NSA, while another slice seeded a young startup built on PayPal’s anti-fraud system: Palantir, bankrolled by Peter Thiel and the CIA.

The same year, a second DARPA project, LifeLog [2], met an identical fate, shut down on the very day Facebook launched. No direct causal link has ever been formally established. But in 2015, the program’s former director told Vice that Facebook had become, in practice, the closest real-world approximation of what LifeLog was designed to be. Make of that what you will.

Fast forward to October 7, 2023, and Israel’s campaign in Gaza. The Israeli military’s Lavender automated targeting system, allegedly developed by Unit 8200, targeted and “handled” an estimated 37,000 individuals classified as Hamas combatants, according to the IDF’s own figures (which should be treated with considerable skepticism; the real number is likely far higher). The system’s admitted error rate was 10%, meaning approximately 3,700 innocent civilians were individually identified, targeted, and killed by mistake. War crimes.

Lavender works by ingesting mass surveillance data on the majority of Gaza’s 2.3 million residents, then scoring each individual on a scale of 1 to 100 according to their estimated likelihood of active membership in Hamas’s military wing or Palestinian Islamic Jihad. Think of it as a credit score — except the consequence of a bad rating is not a rejected loan application. It is death by missile.

The system’s accepted collateral damage thresholds are staggering: up to 15 to 20 civilians killed for each low-ranking Hamas fighter eliminated; over 100 civilian deaths deemed acceptable for a senior commander. War crimes, compounded at industrial scale. The total number of innocent civilians killed in Gaza now exceeds 70,000.

Iran: The Next Laboratory

The unprovoked war of aggression Israel and the United States have waged in the Persian Gulf has provided an even larger testing ground for automated targeting technology: the whole of Iran. Among its documented results: a “double-tap” strike (two successive strikes, the second deliberately targeting rescuers) on a girls’ school in Minab, in southern Iran, killing at least 168 people. A war crime by any standard.

The companies supplying these targeting capabilities are well known.

The most prominent is Palantir. According to UN Special Rapporteur Francesca Albanese, there are “reasonable grounds” to believe Palantir provided predictive surveillance technology, a “central defense infrastructure,” and the AI platform powering Lavender, Gospel [3], and the system known as “Where’s Daddy?” [4]. Palantir has also provided tools enabling the Israeli military to process vast quantities of data for special forces deployment planning and troop logistics, tools that facilitated the commission of war crimes.

The same applies, at an even larger scale, to the Iran campaign.

The Legal Case That Isn’t Being Made — But Should Be

This brings us back to the human rights NGOs.

French courts have held universal jurisdiction over war crimes, crimes against humanity, and genocide since May 12, 2023. German and Spanish courts operate under even fewer procedural constraints. Universal jurisdiction — the principle permitting courts to prosecute crimes committed abroad, by foreigners, against foreigners — is not a theoretical novelty. It is an established legal mechanism that has simply not been used here.

French criminal law on complicity (Articles 121-6 and 121-7 of the Penal Code) allows the prosecution of any individual who contributed to an offense, even without directly committing it.

NGOs such as Amnesty International and the Ligue des droits de l’Homme could file criminal complaints with civil party status in France, Germany, and Spain, and such complaints automatically trigger a formal judicial investigation. They could do so tomorrow.

The defendants would include, for war crimes directly committed:

Benjamin Netanyahu, his cabinet, and the entire Israeli military high command. Donald Trump and his administration — specifically JD Vance, Marco Rubio, Pete Hegseth, Tulsi Gabbard, David Sacks, the directors of all intelligence agencies, and the Joint Chiefs of Staff.

And for complicity in war crimes:

Alex Karp and all of Palantir’s executive leadership, along with Peter Thiel. Sam Altman and OpenAI’s leadership. Every executive of any other company that provided technical targeting capabilities used in these campaigns.

Why This Matters Beyond the Courtroom

The value of launching such prosecutions is threefold.

First, it would force a rigorous, public and legal reckoning with the specific criminal liabilities attached to automated targeting systems and autonomous weapons — laying the groundwork for the international convention that doesn’t yet exist but desperately needs to.

Second, it would materially increase the criminal risk faced by states deploying AI targeting and by companies profiting from supplying the tools, raising the cost of the next atrocity while that convention is being negotiated.

Third, it would begin to loosen America’s stranglehold on European AI policy, by holding U.S. political and corporate decision-makers personally accountable. It would create structural incentives favoring ethical AI and disadvantaging companies like OpenAI and Palantir that participate in autonomous killing.

Yes, the chorus of the faint-hearted will object that this is pointless — the United States doesn’t recognize international law when it’s inconvenient. They have short memories. George W. Bush has not left the United States since 2009, when a Spanish criminal investigation for war crimes related to Guantánamo torture was opened against him. The risk of arrest abroad is not theoretical.

Now imagine every name listed above subject to a criminal investigation, announced publicly, because a civil party complaint in France automatically opens a judicial inquiry. Imagine Interpol red notices issued when those persons of interest, summoned for questioning by French, German or Spanish investigative magistrates, fail to appear. Imagine the diplomatic and political reverberations on the international stage. Imagine global techno-Nazis forced to stay local by fear of arrest.

Washington will bring every pressure it can to bear to kill the proceedings. That is a given. But consider the political arithmetic. Trump faces midterm elections in November. Republican strategists privately acknowledge the possibility of losing the Senate as well as the House. The scenario ends with demands for Trump’s resignation to avoid prosecution: the Nixon playbook, updated.

The tools exist. The crimes are documented. The legal pathways are open.

What are the NGOs waiting for?

[1] The Information Awareness Office attempted to achieve "Total Information Awareness" by creating enormous computer databases to gather and store the personal information of everyone in the United States (including personal emails, social networks, credit card records, phone calls, medical records, and numerous other sources) without any requirement for a search warrant. This information would then be analyzed to look for suspicious activities, connections between individuals, and "threats." The program also included funding for biometric surveillance technologies that could identify and track individuals using surveillance cameras and other methods.

[2] The goal was to develop software that deduces behavioral patterns from monitoring people's daily activities. More specifically, it sought to "build a database tracking a person's entire existence" (including relationships, communications, media consumption habits, purchases, and behavior) in order to build a digital record of "everything an individual says, sees, or does."

[3] AI targeting of buildings and infrastructure.

[4] “Where’s Daddy?” is a tracking system designed to monitor individuals already flagged by Lavender, and to signal to the military the precise moment those targets entered their family homes, at which point a strike would be made.